The approval

A loan application arrives. The model says 90% — approve. The loan is approved. The borrower defaults. Forty-seven thousand dollars.

The model was not malfunctioning. It was doing exactly what it was asked to do: compress every piece of evidence it had about a borrower into a single number between zero and one, and return that number. The number said 90%. The officer read 90% as certainty. The borrower defaulted anyway.

This is not a story about a bad model. The model's overall accuracy was 78% — a standard result for the German Credit dataset, a benchmark that has been used in credit risk research for decades. This is a story about a bad answer. Not wrong in the sense that the model miscalculated. Wrong in the sense that the answer was the wrong kind of thing to give.

The wrong question

When a model returns a confidence score, it is answering the question: how sure are you?

This is the wrong question. Not because certainty does not matter, but because the question treats all situations as the same kind of situation, differing only in degree. Ninety percent sure it is benign. Ninety percent sure it is one of three things. Ninety percent sure despite having almost no evidence. These are not the same situation. They are not even the same kind of situation. But the score is the same.

A doctor in an emergency room faces a patient. After examination, three scenarios:

The symptoms clearly indicate a fracture. There is one answer, and the evidence is decisive. The doctor acts.

The symptoms are consistent with two conditions — a fracture or a severe sprain. The evidence narrows the field but does not decide. The doctor orders an X-ray.

The symptoms are unlike anything the doctor has seen. The evidence is insufficient to narrow the field at all. The doctor calls a specialist.

These are three different epistemic situations, and they produce three different actions. The doctor does not treat them as points on a scale from zero to one hundred. She treats them as qualitatively different. Because they are.

What the score collapses

A confidence score collapses these situations into a single axis. It takes the structure of the evidence — how it is distributed, what it supports, what it excludes — and compresses it into a number. The compression is lossy. What is lost is the shape of the situation.
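Here is a minimal Python sketch of that loss. The two probability vectors are invented for illustration. `confidence_score` and `shortlist` (with its 0.10 floor) are hypothetical helpers, not part of any library. Both vectors report the same score, but only one of them has ruled a class out.

```python
# Illustrative sketch: invented probabilities over three classes, A, B and C.
# Helper names and the 0.10 floor are hypothetical.


def confidence_score(probs: dict[str, float]) -> float:
    """The single number a standard classifier reports: its top probability."""
    return max(probs.values())


def shortlist(probs: dict[str, float], floor: float = 0.10) -> list[str]:
    """Classes still in play; this is part of the shape the score drops."""
    return sorted(c for c, p in probs.items() if p >= floor)


narrowed = {"A": 0.60, "B": 0.38, "C": 0.02}  # field narrowed to A or B; C ruled out
spread = {"A": 0.60, "B": 0.20, "C": 0.20}    # nothing ruled out

print(confidence_score(narrowed), confidence_score(spread))  # 0.6 0.6
print(shortlist(narrowed), shortlist(spread))  # ['A', 'B'] ['A', 'B', 'C']
```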

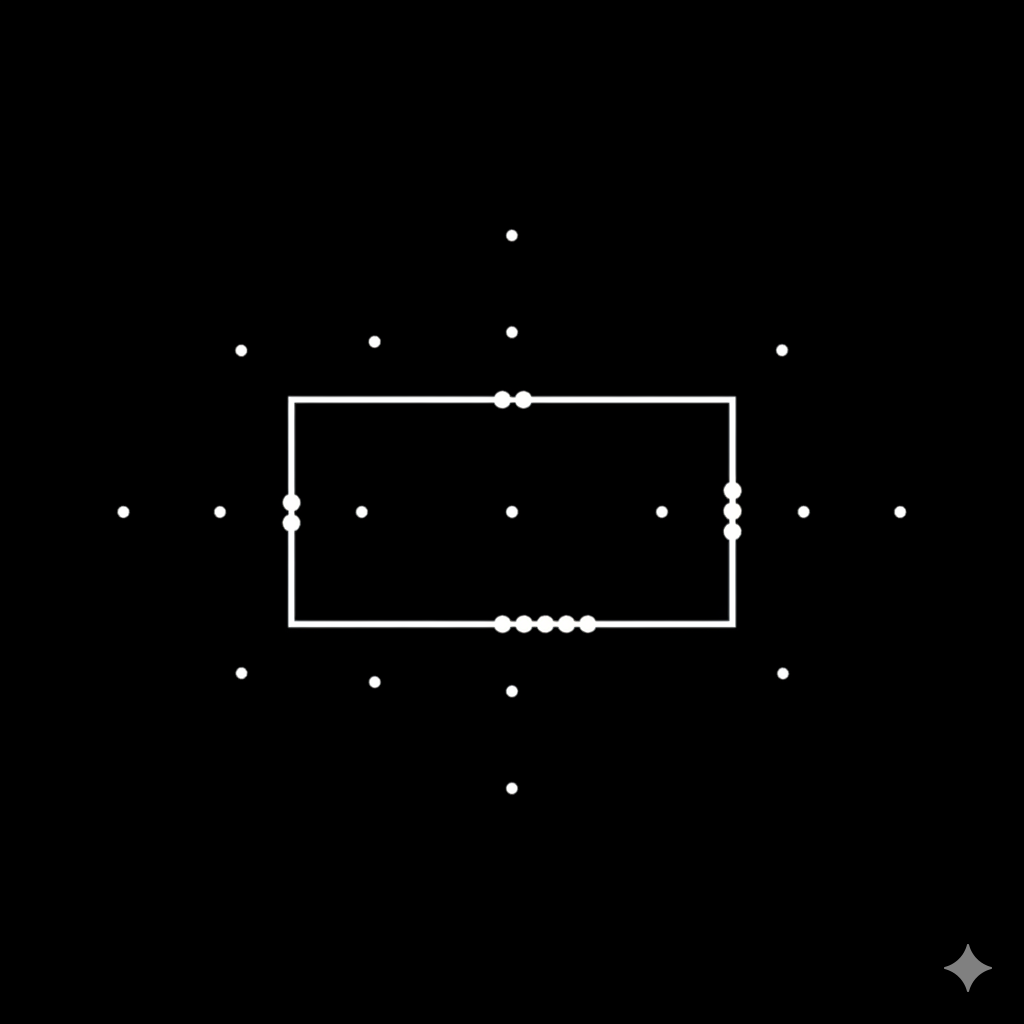

Consider what the evidence actually looks like before the compression.

In the first case, the evidence overwhelmingly supports one class. The margin between the best-supported answer and the next is large. No plausible alternative exists. This is not just high confidence — it is a situation where one answer has been singled out by the evidence.

In the second case, the evidence supports two or three classes. None has a decisive margin. The model cannot commit to one answer, but it has narrowed the field. This is not low confidence — it is a different kind of result entirely, one that carries useful information (the shortlist) that a single number destroys.

In the third case, the evidence is thin. No class has meaningful support. The model is not uncertain about which answer is right — it has no basis for any answer. The evidence did not narrow the field at all.

A confidence score maps all three to positions on a line. The first might be 92%. The second might be 62%. The third might be 59%. The last two are only three points apart, inside the band that most operators treat as "moderately confident." Yet those two cases are structurally different.

At 62%, the evidence might look like this: seven rules fired for class A, five for class B, one for class C. The model cannot pick a winner, but it has narrowed the field to two. The useful information — it is A or B, not C — is present in the evidence and destroyed by the score.

At 59%, the evidence might look like this: two rules fired total, one for class A, one for class B. The model has almost nothing to work with. The score is three points lower, but the situation is entirely different — not ambiguity between plausible options, but absence of evidence altogether. The first case warrants a shortlist. The second warrants escalation. The score says they are nearly the same.
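The two rule-firing profiles above can be summarized in a few lines of Python. This is a sketch under stated assumptions. `summarize` is a hypothetical helper. The top class's share of fired rules is only a crude stand-in for the 62% and 59% scores, which come from a model rather than from vote counts. The shares come out close, 0.54 and 0.50. What separates the cases is the total amount of evidence, and a normalized score throws that away.

```python
from collections import Counter


def summarize(fired: Counter, min_share: float = 0.25) -> dict:
    """Hypothetical helper; min_share is an illustrative cut-off, not a tuned value."""
    total = sum(fired.values())
    ranked = fired.most_common()  # classes ordered by number of rules fired
    top_share = ranked[0][1] / total  # score-like number: the leader's share of votes
    shortlist = [c for c, n in ranked if n / total >= min_share]
    return {"total": total, "top_share": round(top_share, 2), "shortlist": shortlist}


ambiguous = Counter({"A": 7, "B": 5, "C": 1})  # 13 rules fired
sparse = Counter({"A": 1, "B": 1})             # 2 rules fired

print(summarize(ambiguous))  # {'total': 13, 'top_share': 0.54, 'shortlist': ['A', 'B']}
print(summarize(sparse))     # {'total': 2, 'top_share': 0.5, 'shortlist': ['A', 'B']}
```

Judged by shares alone, both profiles even produce the same shortlist. Only `total` shows that the second one rests on two rules.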

The score preserves the ordering and discards the structure. The operator sees a number. The number hides the only thing that matters: what kind of situation the model is in.

The cost of guessing confidently

The collapse is not merely imprecise. It is dangerous, because it tells the consumer of the prediction what to believe without telling them what to do.

A confidence score says: the answer is probably A. It does not say: you can act on this. It does not say: you should review this. It does not say: you should not act at all. These are the decisions that matter — not what the model thinks, but what the operator should do — and the confidence score provides no guidance.

So the operator invents guidance. Above 70%, approve automatically. Between 40% and 70%, send to review. Below 40%, deny. These thresholds are drawn arbitrarily, and they are drawn the same way regardless of whether the model's 65% reflects genuine ambiguity between two options or total evidential poverty. The threshold cannot distinguish between a model that has narrowed the field and a model that is guessing. The operator cannot either. The score does not carry the information needed to tell them apart.
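The thresholds above fit in one function, sketched here in Python. `route_by_threshold` is a hypothetical name. Treating exactly 70% and exactly 40% as "review" is an assumption, since the text does not say which side the boundaries fall on. The only input is a float, so the router has no way to learn whether the field was narrowed or the evidence was missing.

```python
def route_by_threshold(score: float) -> str:
    """Hypothetical ad hoc routing on a confidence score (boundary handling assumed)."""
    if score > 0.70:
        return "approve"  # above 70%: approve automatically
    if score >= 0.40:
        return "review"   # between 40% and 70%: send to review
    return "deny"         # below 40%: deny


# The narrowed-field case (62%) and the evidence-poor case (59%) get identical treatment.
print(route_by_threshold(0.62), route_by_threshold(0.59))  # review review
```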

In credit risk, this means loans approved on confident guesses. In medical triage, patients routed to routine care on ambiguous evidence. In manufacturing, defective parts shipped because the model committed when it should have abstained.

The cost is not borne by the model. The model was asked for a number and it returned one. The cost is borne by the person who trusted the number to mean something it was never designed to mean.

Three types, not a score

There is a different kind of answer. Not a better number — a different structure.

Instead of asking the model "how sure are you?", ask "what kind of situation is this?" The answer is one of three types.

The evidence singles out one class. The margin is decisive. One answer has won. This is a commitment — the model knows, and you can act on it.

The evidence supports a small number of classes. No single winner, but the field has been narrowed. This is a partial result — the model has done useful work, but the final decision requires something the model does not have. A human, a second test, additional data.

The evidence is insufficient. No class has meaningful support. The model has nothing to offer. This is an honest declaration of ignorance — the rarest and most valuable output a classifier can produce, because it routes the case to someone who can actually help before damage is done.

These are not confidence levels. They are not thresholds drawn on a score. They are structural properties of the evidence itself, determined by how the evidence is distributed across classes — whether it converges, narrows, or fails to discriminate. The type is not a number repackaged. It is a different measurement.
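The text does not specify how the type is computed. What follows is therefore only one plausible rule, sketched in Python, and it is not Tercet's implementation. It works on counts of rules fired per class, as in the 62% and 59% examples earlier. `type_evidence` and its three parameters (`min_support`, `decisive_margin`, `shortlist_share`) are hypothetical. Its cut-offs apply to properties of the evidence profile rather than to a single score: how much evidence there is, how far the leader is ahead, and which classes remain in play.

```python
from collections import Counter
from enum import Enum


class Kind(Enum):
    CERTAIN = "CERTAIN"
    PARTIAL = "PARTIAL"
    UNCERTAIN = "UNCERTAIN"


def type_evidence(
    fired: Counter,
    min_support: int = 5,           # hypothetical: fewer rules than this is thin evidence
    decisive_margin: float = 0.5,   # hypothetical: leader's share minus runner-up's share
    shortlist_share: float = 0.25,  # hypothetical: share a class needs to stay in play
) -> tuple[Kind, list[str]]:
    """Classify the evidence as converging, narrowing, or failing to discriminate."""
    total = sum(fired.values())
    if total < min_support:
        return Kind.UNCERTAIN, []  # too little evidence to narrow the field at all

    ranked = fired.most_common()
    top_share = ranked[0][1] / total
    runner_up_share = ranked[1][1] / total if len(ranked) > 1 else 0.0
    if top_share - runner_up_share >= decisive_margin:
        return Kind.CERTAIN, [ranked[0][0]]  # one class singled out

    shortlist = [c for c, n in ranked if n / total >= shortlist_share]
    if not shortlist:
        return Kind.UNCERTAIN, []  # evidence spread too thinly to favor any class
    return Kind.PARTIAL, shortlist  # field narrowed, no single winner


for fired in (
    Counter({"A": 12, "B": 1}),
    Counter({"A": 7, "B": 5, "C": 1}),
    Counter({"A": 1, "B": 1}),
):
    kind, classes = type_evidence(fired)
    print(kind.value, classes)
# CERTAIN ['A']
# PARTIAL ['A', 'B']
# UNCERTAIN []
```

The parameter values are placeholders. In practice they would have to be chosen and validated for a given model and domain.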

The three types produce three protocols. A commitment authorizes automatic action. A partial result authorizes assisted decision-making with a shortlist. A declaration of ignorance authorizes escalation. The operator does not need to invent thresholds. The classifier has already told them what kind of answer it is giving, and what kind of response that answer warrants.
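Mapping a type to its protocol is a plain dispatch, sketched below in Python. The `Kind` enum, the `protocol` function, and the action strings are hypothetical. No numeric threshold appears anywhere in it, because the type has already settled what kind of response is warranted.

```python
from enum import Enum


class Kind(Enum):
    CERTAIN = "CERTAIN"
    PARTIAL = "PARTIAL"
    UNCERTAIN = "UNCERTAIN"


def protocol(kind: Kind, shortlist: list[str]) -> str:
    """Hypothetical mapping from the type of answer to the response it authorizes."""
    if kind is Kind.CERTAIN:
        # A commitment authorizes automatic action on the single answer.
        return f"act automatically: {shortlist[0]}"
    if kind is Kind.PARTIAL:
        # A partial result goes to a human or a second test, with the shortlist attached.
        return "assisted decision, shortlist: " + ", ".join(shortlist)
    # A declaration of ignorance is escalated; no class is proposed.
    return "escalate"


print(protocol(Kind.CERTAIN, ["approve"]))  # act automatically: approve
print(protocol(Kind.PARTIAL, ["A", "B"]))   # assisted decision, shortlist: A, B
print(protocol(Kind.UNCERTAIN, []))         # escalate
```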

What honesty looks like in a classifier

Every classifier is an instrument — a way of looking at a sample and producing a judgment. Like every instrument, it has edges: cases it handles well, cases it cannot resolve, cases where the evidence is simply not there.

A confidence score hides the edges. It returns a number regardless of whether the instrument is operating within its range or beyond it. A model that is guessing and a model that knows produce outputs on the same scale, indistinguishable in kind. The instrument does not report its own limits. The operator cannot see them.

Typed uncertainty exposes the edges. When the evidence is insufficient, the classifier says so — not by returning a low number, but by returning a different kind of answer. The operator sees not just what the model thinks, but what kind of thinking produced the result. Was it decisive? Was it partial? Was it empty?

The model does not become more accurate. It does not know more. It becomes honest about what kind of answer it is giving. And honesty about the kind of answer turns out to matter more than precision about the degree.

What we built

We ran this approach against the German Credit dataset (1,000 loan applications, 5-fold cross-validation), the same benchmark that produces the 78% accuracy figure. The model with typed uncertainty did not achieve a higher overall accuracy. It achieved a different kind of result: when it committed, returning CERTAIN, it was right 95.7% of the time. And the 43 applications that a standard classifier approved with high confidence and that later defaulted? Every one of them was flagged as PARTIAL or UNCERTAIN. Not by a better model. By a model that could say "I don't know this one" instead of "probably yes."
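The 95.7% figure is accuracy measured only on the cases where the model committed. Below is a minimal Python sketch of that metric. `committed_accuracy` is a hypothetical helper, and the six records are made-up toy data, not the German Credit results. The function also returns coverage, the fraction of cases committed on, because accuracy on the committed subset is only interpretable alongside the size of that subset.

```python
from typing import Optional


def committed_accuracy(
    records: list[tuple[str, str, str]],
) -> tuple[Optional[float], float]:
    """Hypothetical helper: accuracy on CERTAIN predictions only, plus coverage.

    Each record is (type, predicted label, true label).
    """
    committed = [(pred, truth) for kind, pred, truth in records if kind == "CERTAIN"]
    if not committed:
        return None, 0.0  # the model never committed, so there is nothing to score
    correct = sum(pred == truth for pred, truth in committed)
    return correct / len(committed), len(committed) / len(records)


# Toy records for illustration only.
toy = [
    ("CERTAIN", "good", "good"),
    ("CERTAIN", "good", "good"),
    ("CERTAIN", "bad", "bad"),
    ("CERTAIN", "good", "bad"),    # a committed mistake
    ("PARTIAL", "good", "bad"),    # not scored: the model did not commit
    ("UNCERTAIN", "good", "good"),
]

accuracy, coverage = committed_accuracy(toy)
print(f"{accuracy:.2f} {coverage:.2f}")  # 0.75 0.67
```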

The results convinced us that this should not stay inside a research lab. A classifier that can declare its own limits is too useful to exist only in benchmarks.

We built it into a production API called Tercet. Every prediction returns a type — CERTAIN, PARTIAL, or UNCERTAIN — determined by the structure of the evidence, not by a threshold on a score.

But the deeper point is not the tool. It is the question the tool asks. A confidence score answers "how sure are you?" A typed prediction answers "what kind of answer is this?" The second question is harder to ask, because it requires the instrument to report on itself: to classify its own output before handing it to the operator. But it is the question that matters, because it is the question the operator actually needs answered before they can act.

The loan officer does not need to know that the model is 90% sure. She needs to know whether this is the kind of case where she can trust the model at all.